Neural Network Visualizer (Placeholder)

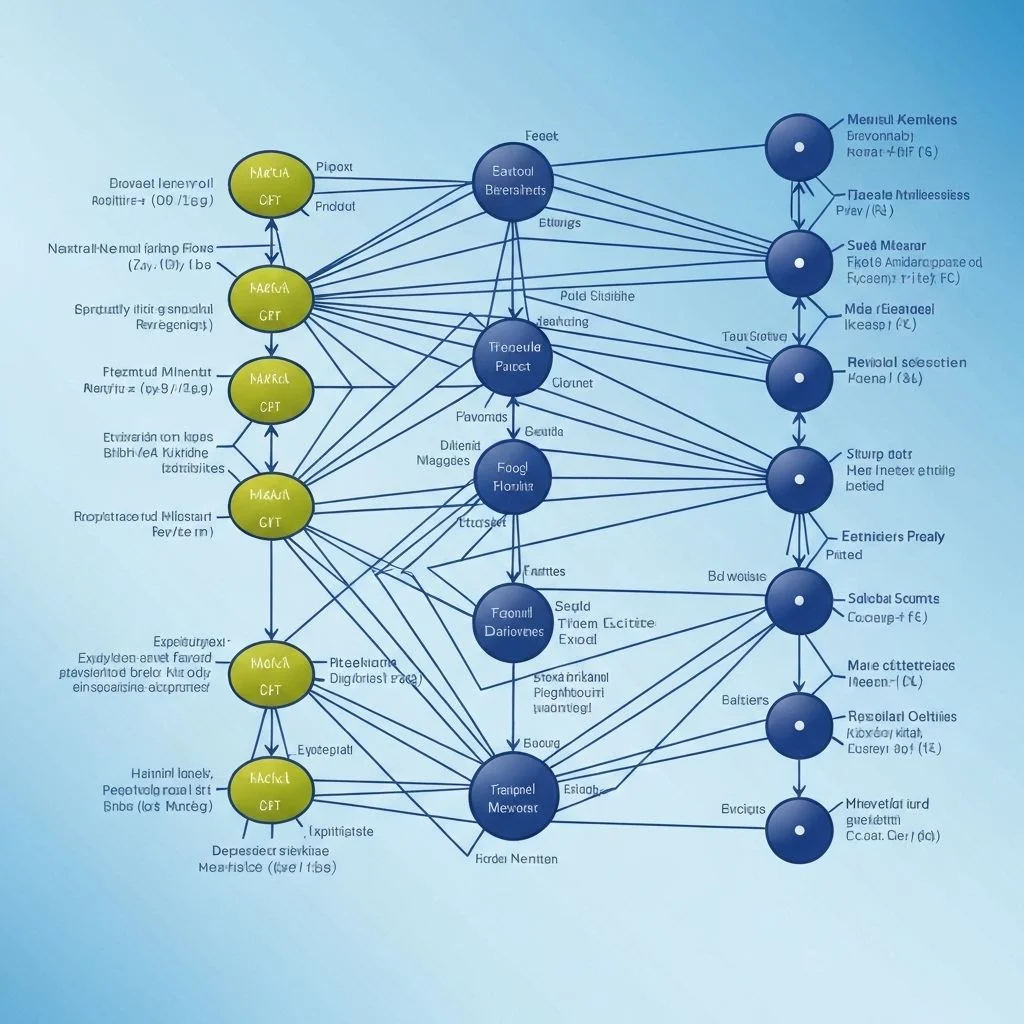

Interactive tooling for watching neural networks train in real time, with animated node graphs, metric overlays, and D3-driven visuals.

This is a feature-complete demo build. The playground is live — give it a few seconds to warm up the WebGL context on first load.

This project turns raw model data into an interactive playground where you can watch layers activate, weights adjust, and metrics evolve in real time.

Motivation

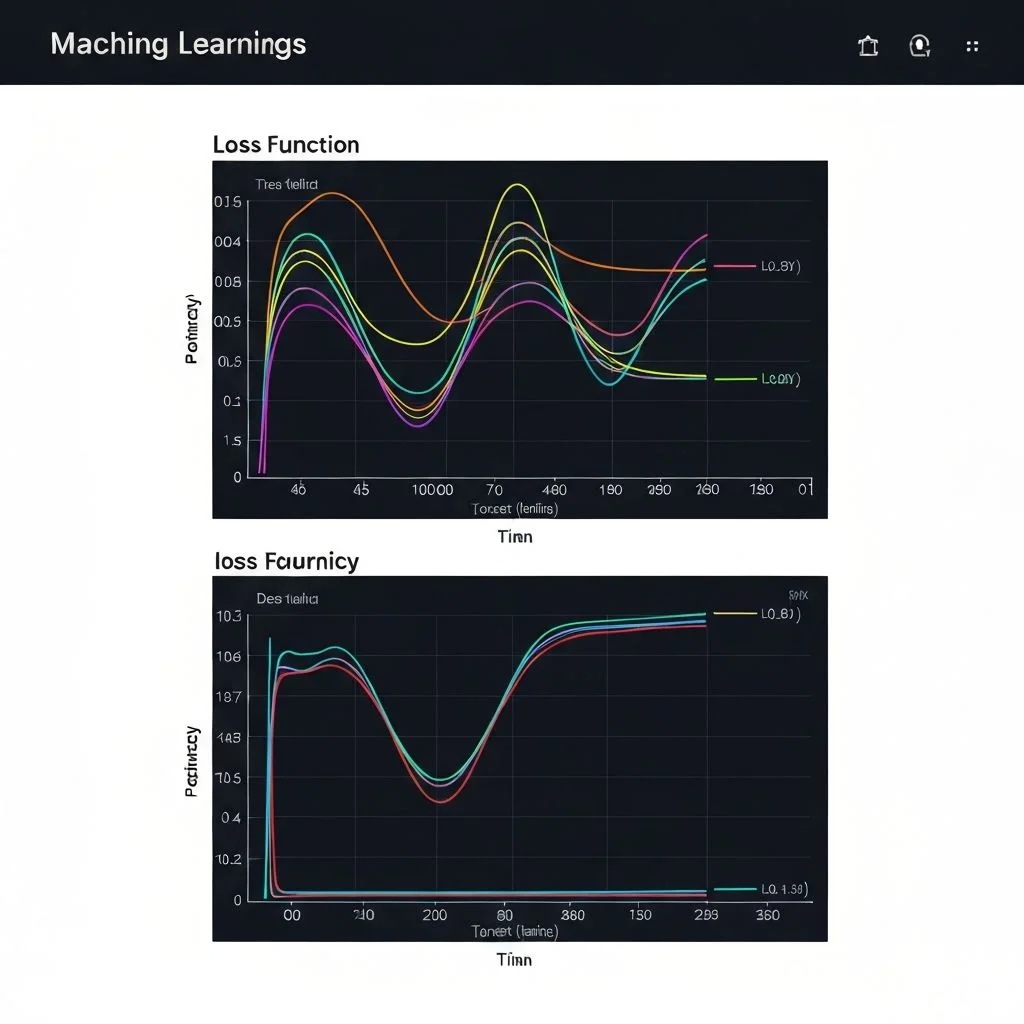

Most debugging workflows for neural networks are blind: you stare at loss curves and guess what the geometry is doing. I wanted to see the network — edges brightening as gradients flow, nodes clustering by activation magnitude, dead neurons greying out.

The result is something between a debugger and a data visualisation. It surfaces intuition that pure metrics hide.

Architecture

Training runs in TensorFlow.js inside a worker thread, which streams weight data to the main thread. This keeps the visualisation buttery smooth even when training larger models.

- Train model in TensorFlow.js

- Stream weight matrices over postMessage

- Render D3 force layout with WebGL-backed nodes

- Sync epoch scrubber to the timeline store via useReducer
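
The last step can be sketched as a plain reducer function. This is only an illustration: the state shape (TimelineState), the action names, and the clamping behaviour are assumptions, not the project's actual store. In a React component a function like this would be passed to useReducer.

```typescript
// Hypothetical timeline store; state shape and action names are assumptions.
interface TimelineState {
  epoch: number;    // epoch currently shown by the scrubber
  maxEpoch: number; // latest epoch reported by the training worker
  playing: boolean; // whether playback advances automatically
}

type TimelineAction =
  | { type: "epochReceived"; epoch: number }
  | { type: "scrub"; epoch: number }
  | { type: "play" }
  | { type: "pause" }
  | { type: "tick" };

const initialTimeline: TimelineState = { epoch: 0, maxEpoch: 0, playing: false };

function timelineReducer(state: TimelineState, action: TimelineAction): TimelineState {
  switch (action.type) {
    case "epochReceived":
      // New training data only extends the scrubbable range.
      return { ...state, maxEpoch: Math.max(state.maxEpoch, action.epoch) };
    case "scrub": {
      // Clamp to the range seen so far; manual scrubbing pauses playback.
      const epoch = Math.min(Math.max(0, Math.round(action.epoch)), state.maxEpoch);
      return { ...state, epoch, playing: false };
    }
    case "play":
      // Nothing to play if the scrubber is already at the latest epoch.
      return { ...state, playing: state.epoch < state.maxEpoch };
    case "pause":
      return { ...state, playing: false };
    case "tick": {
      if (!state.playing) return state;
      const epoch = Math.min(state.epoch + 1, state.maxEpoch);
      // Playback stops by itself once it reaches the latest epoch.
      return { ...state, epoch, playing: epoch < state.maxEpoch };
    }
    default:
      return state;
  }
}
```

Keeping the reducer free of React imports means the scrubber logic can be unit-tested without rendering anything.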

Worker isolation

The training loop runs in a SharedWorker so multiple browser tabs can observe the same session without re-running computations. Messages are batched every 50 ms to avoid saturating the main thread.
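
The batching idea can be sketched roughly as follows. The class name MessageBatcher and its methods are hypothetical, and the delivery callback stands in for whatever actually posts to the connected tabs; only the 50 ms interval comes from the description above.

```typescript
// Hypothetical batcher: collects messages and delivers them as one array.
class MessageBatcher<T> {
  private pending: T[] = [];
  private timer: ReturnType<typeof setInterval> | undefined = undefined;
  private readonly deliver: (batch: T[]) => void;
  private readonly intervalMs: number;

  constructor(deliver: (batch: T[]) => void, intervalMs = 50) {
    this.deliver = deliver;
    this.intervalMs = intervalMs;
  }

  // Queue a message; nothing is sent until the next flush.
  push(message: T): void {
    this.pending.push(message);
  }

  // Deliver everything queued so far as a single batch (no-op when empty).
  flush(): void {
    if (this.pending.length === 0) return;
    const batch = this.pending;
    this.pending = [];
    this.deliver(batch);
  }

  // Flush on a fixed interval, e.g. every 50 ms.
  start(): void {
    if (this.timer === undefined) {
      this.timer = setInterval(() => this.flush(), this.intervalMs);
    }
  }

  // Stop the interval and send whatever is still queued.
  stop(): void {
    if (this.timer !== undefined) {
      clearInterval(this.timer);
      this.timer = undefined;
    }
    this.flush();
  }
}

// Possible use inside a worker (assumed wiring, shown as a comment only):
//   const batcher = new MessageBatcher<object>((batch) => port.postMessage(batch));
//   batcher.start();
```

With this shape the main thread handles at most one message per interval per port, regardless of how many updates the training loop produces in between.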

Feature Highlights

- Animated force-directed graphs with gradient weight edges.

- Epoch timeline scrubber with playback controls.

- Heatmaps for layer activations alongside each node cluster.

- WebGL instancing for fast rendering at scale — 2 000+ nodes stay interactive.

- Export snapshots as SVG or PNG at any epoch.

Implementation

The renderEdge function maps weight magnitude to stroke opacity, so high-gradient connections visually dominate the graph during rapid learning phases.
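
One way such a mapping could look is sketched below. The helper name edgeOpacity, the linear scaling, and the minimum opacity floor are all assumptions for illustration; the actual renderEdge may scale differently.

```typescript
// Hypothetical helper: linear map from |weight| to an opacity in [minOpacity, 1].
function edgeOpacity(weight: number, maxAbsWeight: number, minOpacity = 0.05): number {
  // Without a usable scale (zero, negative, or NaN), fall back to the faintest edge.
  if (!(maxAbsWeight > 0)) return minOpacity;
  // Normalise the magnitude to [0, 1], clamping outliers.
  const t = Math.min(Math.abs(weight) / maxAbsWeight, 1);
  // Interpolate so t = 0 gives minOpacity and t = 1 gives full opacity.
  return minOpacity * (1 - t) + t;
}
```

The floor keeps near-zero connections faintly visible, so the overall topology stays readable while strong edges stand out.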

Tech choices

I tried three renderers before settling on WebGL instancing:

- SVG — readable but collapses past ~300 nodes.

- Canvas 2D — fast enough but no sub-pixel anti-aliasing on edges.

- WebGL instancing — handles 2 000+ nodes at 60 fps with smooth weight interpolation.

The public API surface is a single hook, useSimulation(modelRef, { fps: 30 }), which returns { nodes, links, epoch, isTraining }.

Lessons learned

- Offloading training to a worker makes the 60 fps target achievable, but serialising large weight tensors is the new bottleneck — sparse delta encoding helped.

- D3's force simulation is not designed for real-time mutation; batching node updates and calling simulation.nodes(updated) once per frame avoids layout thrashing.

- WebGL instancing requires careful attribute buffer management; I ended up writing a small helper that handles resizing and avoids stale buffer references.
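
To illustrate the sparse delta encoding mentioned in the first lesson, here is a minimal sketch. The wire format (SparseDelta), the function names, and the epsilon threshold are assumptions, and this variant sends the new values of changed entries rather than arithmetic differences; the project's actual encoding may differ.

```typescript
// Hypothetical wire format: only entries that changed by more than epsilon.
interface SparseDelta {
  length: number;       // length of the full weight array
  indices: Uint32Array; // positions whose value changed
  values: Float32Array; // new values at those positions
}

function encodeDelta(prev: Float32Array, next: Float32Array, epsilon = 1e-4): SparseDelta {
  if (prev.length !== next.length) throw new Error("length mismatch");
  const indices: number[] = [];
  const values: number[] = [];
  for (let i = 0; i < next.length; i++) {
    // Skip entries whose change is too small to matter visually.
    if (Math.abs(next[i] - prev[i]) > epsilon) {
      indices.push(i);
      values.push(next[i]);
    }
  }
  return {
    length: next.length,
    indices: Uint32Array.from(indices),
    values: Float32Array.from(values),
  };
}

function applyDelta(base: Float32Array, delta: SparseDelta): Float32Array {
  if (base.length !== delta.length) throw new Error("length mismatch");
  const out = base.slice(); // copy so the caller's array is left untouched
  for (let k = 0; k < delta.indices.length; k++) {
    out[delta.indices[k]] = delta.values[k];
  }
  return out;
}
```

For this to stay accurate over many steps, the sender should encode against the state the receiver last reconstructed, not the previous raw weights; otherwise changes smaller than epsilon can accumulate unnoticed.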

Observability shouldn't stop at metrics dashboards — seeing the structure evolve unlocks intuition.

Have thoughts?

I'm curious what others see or think. Feel free to reach out or leave feedback by email: joshuatjhie@pm.me